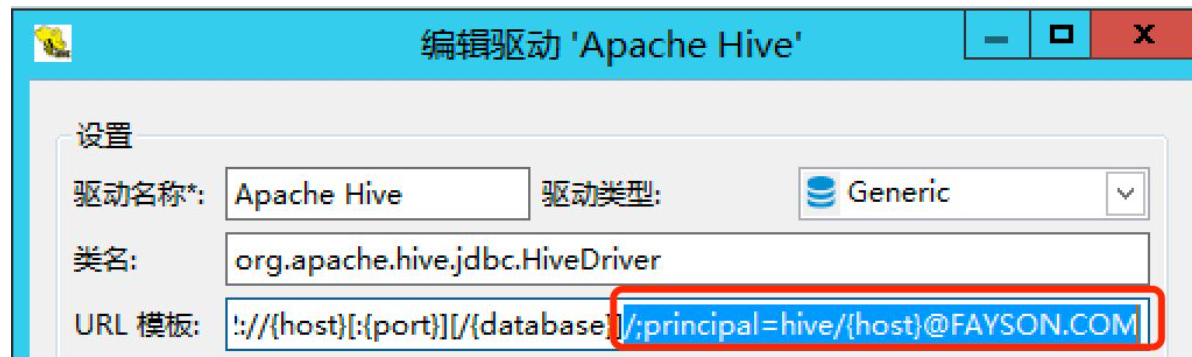

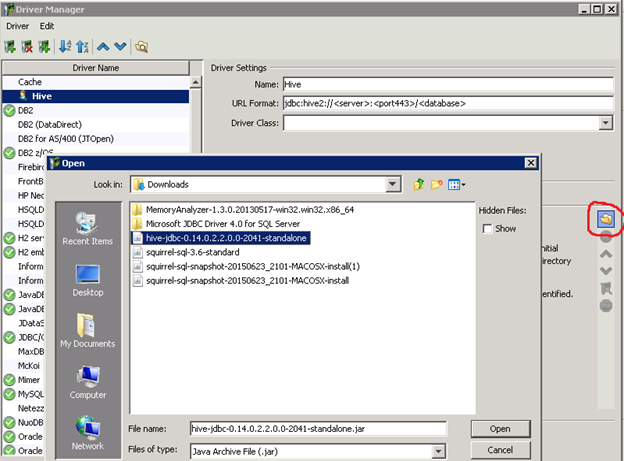

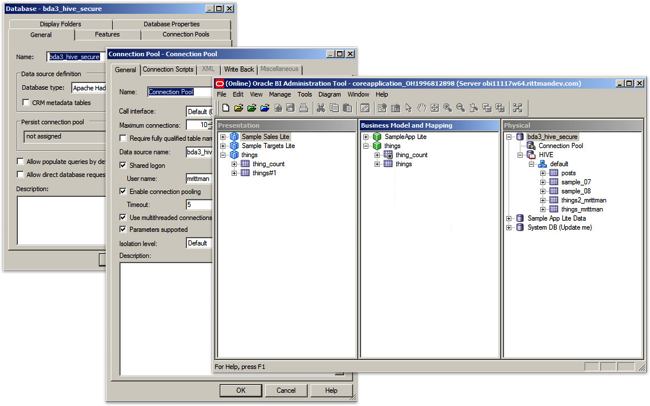

I have access to a Kerberos-enabled cluster at my workplace, and I am able to connect to Hive from R using the following steps.

Step 1: Download the latest JDBC driver from Cloudera.

Step 2: Unzip it and use only the JDBC 4 connector API.

Step 3: Run `klist` in a terminal and check that there is an active ticket. If no ticket is present, generate one.

Step 4: Once you have an active ticket, place all the JARs unzipped in Step 2 in a folder.

Step 5: If Sentry governs your Cloudera cluster, make sure to log in and run the R code as a user who has the desired access level.

Step 6: Run the following R code with the appropriate host name and other settings configured in the JDBC URL:

`drvH <- JDBC(driverClass = "4.HS2Driver", classPath = normalizePath(list.files("Drivers/Cloudera-Simba/JDBC4/", pattern = ".jar$", full.names = T, recursive = T)))`

`connH <- dbConnect(drvH, "jdbc:hive2://:10000;AuthMech=1;KrbRealm=YOUR_REALM.COM;KrbHostFQDN=;KrbServiceName=hive")`

You can find more information about Kerberos configuration here.

To get the JAR files, install the Hive JDBC driver on each host in the cluster that will run JDBC applications. Install the Hive JDBC driver (the hive-jdbc package) through the Linux package manager on hosts within the CDH cluster. The driver consists of several JAR files. The same driver can be used by Impala and Hive.

Hive: Connect to Apache Hive/Hadoop. To install Hadoop and Hive, see Apache Hadoop and Apache Hive. To install DbVisualizer on macOS, unpack the tgz file in a terminal window with the following command, or double-click it in the Finder: `open dbvismacos.tgz`. All files are contained in an enclosing folder named DbVisualizer. Start DbVisualizer by opening the following: DbVisualizer.

To set up a connection with Hive/Hadoop in DbVisualizer, do as follows. In the User Specified tab, click the Open File button and select the file, located in the lib subfolder of the installation directory. In the Driver Class menu, select the ApacheHiveDriver class.

To use pgAdmin to connect to PostgreSQL with Kerberos authentication, take the following steps. Launch the pgAdmin application on your client computer. On the Dashboard tab, choose Add New Server. In the Create - Server dialog box, enter a name on the General tab to identify the server in pgAdmin.

The KNIME Big Data Connectors support LDAP authentication to connect to a secured cluster. If your cluster is secured with LDAP, you simply have to enter your LDAP user name and password in the Hive/Impala Connector dialog. We plan to support Kerberos-based authentication with the next release of the KNIME Big Data Connectors in July.

Kerberos distributions include MIT Kerberos for Windows 3.2.2; MIT Kerberos for Macintosh 5.0, available as part of Mac OS X 10.3; and Kerberos Extras for Mac OS X 10.2 and later, which enables support of CFM applications to access the bundled Kerberos in Mac OS X 10.
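The ticket check in Step 3 can also be scripted. The following is a minimal sketch, assuming MIT Kerberos, where `klist -s` prints nothing and reports the ticket state through its exit status. The names `KLIST_CMD`, `has_kerberos_ticket`, and `ticket_status` are hypothetical helpers introduced here for illustration, and `your_user` in the usage comment is a placeholder principal.

```shell
#!/bin/sh
# Sketch only: KLIST_CMD, has_kerberos_ticket and ticket_status are
# illustrative names, not part of any Kerberos or Cloudera tooling.

# Allow the klist binary to be overridden (useful for testing).
KLIST_CMD="${KLIST_CMD:-klist}"

# Succeeds (exit status 0) when a readable, unexpired ticket cache exists.
# With MIT Kerberos, `klist -s` is silent and exits non-zero otherwise.
has_kerberos_ticket() {
    "$KLIST_CMD" -s >/dev/null 2>&1
}

# Prints "active" or "missing" depending on the ticket state.
ticket_status() {
    if has_kerberos_ticket; then
        echo "active"
    else
        echo "missing"
    fi
}

# Usage (not run automatically; replace the placeholder principal):
#   [ "$(ticket_status)" = "active" ] || kinit your_user@YOUR_REALM.COM
```

Running the check before starting R avoids a failed `dbConnect` call that is caused only by an expired ticket.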
